A Great Place to Upskill

Company

Get the latest updates from Product Space

© Propel Learnings

There are two kinds of product managers working with AI right now.

The first group uses AI when they remember to. They paste a question into ChatGPT, get an answer, and move on. Occasionally they use it to polish a document or summarize a Zoom call. It saves some time. It feels modern. But nothing about how their team operates has fundamentally changed.

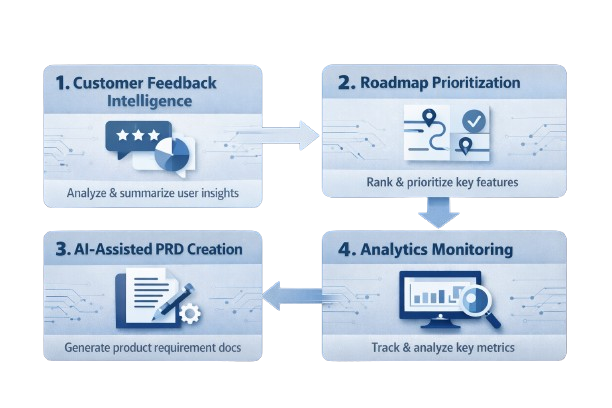

The second group has done something different. They've redesigned how their team works. Discovery, documentation, prioritization, analytics monitoring: all of it runs with AI embedded in the process. These PMs aren't more productive because they use AI more often. They're more productive because they've rebuilt their operating model around it.

That gap is widening in 2026, and most teams are on the wrong side of it.

The product management role has expanded in scope faster than any single person can absorb. Most PMs today are managing multiple product lines, coordinating across engineering, design, data, and marketing, maintaining a coherent long-term strategy, and shipping features on compressed timelines. All simultaneously.

The data volume alone is overwhelming. Thousands of support tickets, NPS responses, usage metrics, competitor moves, stakeholder requests, and backlog items arrive continuously. Processing all of it through traditional workflows is no longer realistic.

The teams still doing it manually aren't keeping up. They're making roadmap decisions based on incomplete signals, catching product issues after users surface them publicly, and writing documentation that's perpetually behind. Not because the PMs aren't talented. Because the volume has outpaced the method.

AI doesn't just speed up the old method. It makes a fundamentally different method possible.

Not all AI tools are the same, and the distinction matters when deciding what to build or adopt.

AI Assistants respond to direct prompts. You ask, they answer. Useful for one-off tasks, but entirely reactive. The assistant has no context about your workflow, your product, or what happened yesterday. Most teams are stuck here.

AI Copilots are embedded in your workflow. They monitor what's happening, surface relevant information proactively, and flag issues before you think to ask. Think of a colleague who reads the same data you do and brings the important things to your attention without being asked. Copilots are appearing inside analytics platforms, roadmap tools, and feedback systems.

Autonomous Agents plan, decide, act, and report back. You review the output rather than driving every step. Agents can use multiple tools, complete multi-step tasks, and operate across a workflow with minimal supervision. This is still emerging for most product teams, but it is closer than most people realize.

The leverage for product teams right now is in moving from assistant to copilot. Autonomous workflows are the next stage to prepare for.

Autonomous workflows are not the same as automation, and confusing the two leads to underinvestment in the right places.

Automation is rules-based. It executes predetermined logic: if this happens, do that. Creating a Jira ticket when a form is submitted is automation. It's useful, but it cannot adapt, interpret, or make a judgment call.

An autonomous workflow does something genuinely different. It reads 500 unstructured support tickets, identifies patterns across them, drafts a feature spec, and routes it to the right team. It interprets. It makes decisions. It acts.

The maturity progression looks like this:

Most teams are in phase three. Phase four is where the compounding advantage begins.

An honest look at how PM hours are spent reveals a consistent pattern.

| What AI should own | What you should own |
| --- | --- |
| First draft of every PRD | Deciding what to build |
| Weekly analytics summaries | Interpreting what the data means |
| Competitor feature monitoring | Setting the strategy |
| Tagging and sorting feedback | Deciding what matters |
| Release notes from sprint data | Deciding what ships |
| Meeting recaps and action items | The relationships in the room |

The right column requires judgment, context, and experience. The left column requires time. When AI handles the left column, something more valuable than time savings occurs. The PM recovers the mental space needed to do the right column well. That is the compounding effect most teams underestimate.

Most product teams are making roadmap decisions based on a fraction of the feedback available to them. Support tickets, app reviews, NPS responses, community forums, and sales call transcripts arrive continuously. No PM can realistically read all of it, so teams rely on what surfaces through conversations or Slack. That is an incomplete and often unrepresentative signal.

An AI pipeline reads everything. It clusters feedback by theme, scores each cluster by frequency and sentiment, tracks how distributions shift over time, and delivers a weekly ranked view of your users' biggest pain points with representative examples attached. Patterns that would never appear in a manual review become visible.
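To make the shape of such a pipeline concrete, here is a minimal sketch. It is an illustration under stated assumptions, not a production design: the keyword map stands in for a real clustering or language-model tagging step, the sentiment values are assumed to be precomputed, and the `pain` formula is one arbitrary way to combine frequency and sentiment. All names and sample data are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical keyword map standing in for a real clustering or LLM tagging step.
THEME_KEYWORDS = {
    "performance": ("slow", "lag", "timeout"),
    "billing": ("invoice", "charge", "refund"),
    "onboarding": ("signup", "tutorial", "confusing"),
}


@dataclass
class Feedback:
    text: str
    sentiment: float  # assumed precomputed: -1.0 (negative) to 1.0 (positive)


def tag_theme(text: str) -> str:
    """Assign the first theme whose keywords appear in the text."""
    lowered = text.lower()
    for theme, keywords in THEME_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            return theme
    return "other"


def rank_themes(items: list[Feedback]) -> list[dict]:
    """Group feedback by theme and rank themes by a simple pain score."""
    clusters = defaultdict(list)
    for item in items:
        clusters[tag_theme(item.text)].append(item)

    ranked = []
    for theme, members in clusters.items():
        avg_sentiment = sum(m.sentiment for m in members) / len(members)
        # Assumed scoring rule: more mentions and more negative sentiment rank higher.
        pain = len(members) * (1.0 - avg_sentiment) / 2.0
        ranked.append({
            "theme": theme,
            "count": len(members),
            "pain": round(pain, 2),
            # Most negative item serves as the representative example.
            "example": min(members, key=lambda m: m.sentiment).text,
        })
    return sorted(ranked, key=lambda r: r["pain"], reverse=True)


sample = [
    Feedback("App is so slow on login", -0.8),
    Feedback("Dashboard lag is terrible", -0.6),
    Feedback("Wrong charge on my invoice", -0.9),
    Feedback("Signup was easy", 0.7),
]
ranked = rank_themes(sample)
for row in ranked:
    print(row)
```

Tracking how the distribution shifts over time would mean storing each week's ranked output and comparing counts per theme across weeks, which this sketch leaves out.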

Manual backlog scoring takes longer than it should and still feels subjective. Scores drift as meetings run long and context shifts, so items reviewed late in a session are rarely judged by the same standard as items reviewed early.

AI applies frameworks like RICE or ICE consistently across an entire backlog, incorporating usage data, impact estimates, and engineering velocity signals. Every item gets evaluated against the same criteria. The PM reviews the ranked output, adjusts for context the AI doesn't hold, and moves forward with more confidence and less time invested.
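For reference, RICE is simple arithmetic: reach times impact times confidence, divided by effort. The sketch below applies that formula uniformly to a small backlog. The item names and numbers are invented for illustration, and the units (users per quarter, person-months) are assumptions a team would set for itself.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    name: str
    reach: float       # users affected per period (assumed: per quarter)
    impact: float      # relative impact, e.g. 0.25 (minimal) to 3 (massive)
    confidence: float  # 0.0 to 1.0
    effort: float      # assumed unit: person-months, must be positive


def rice_score(item: BacklogItem) -> float:
    """RICE = (reach * impact * confidence) / effort."""
    if item.effort <= 0:
        raise ValueError(f"effort must be positive for {item.name!r}")
    return item.reach * item.impact * item.confidence / item.effort


def rank_backlog(items: list[BacklogItem]) -> list[BacklogItem]:
    """Return items sorted from highest to lowest RICE score."""
    return sorted(items, key=rice_score, reverse=True)


# Hypothetical backlog used only to show the calculation.
backlog = [
    BacklogItem("Bulk export", reach=4000, impact=0.5, confidence=1.0, effort=4),
    BacklogItem("Saved filters", reach=2000, impact=1, confidence=0.8, effort=2),
    BacklogItem("SSO support", reach=500, impact=3, confidence=0.5, effort=1),
]

for item in rank_backlog(backlog):
    print(f"{item.name}: {rice_score(item):.0f}")
```

The consistency comes from the function, not from the model: every item passes through the same formula. The PM's adjustment for context happens after the ranking, on top of it.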

Writing PRDs follows a format experienced PMs have replicated dozens of times. It is important work. It is also highly formulaic.

Give an AI a structured brief: the feature, the target user, the job to be done, the constraints. It produces a solid first draft. The PM edits and refines rather than writing under pressure from a blank page. The output is often better, because editing a well-structured draft is a cleaner thinking process than writing from scratch on a deadline.
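One way to keep the brief structured is to treat it as data rather than free text. The sketch below is a hypothetical shape for that brief, using the four elements named above; the class name, field names, and example values are assumptions, and the step that sends the text to a model is deliberately left out.

```python
from dataclasses import dataclass, field, fields


@dataclass
class FeatureBrief:
    feature: str
    target_user: str
    job_to_be_done: str
    constraints: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return names of fields left empty, so gaps are caught before drafting."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def to_brief_text(self) -> str:
        """Render the brief as plain text suitable for pasting into a prompt."""
        missing = self.missing_fields()
        if missing:
            raise ValueError(f"Brief is incomplete: {', '.join(missing)}")
        lines = [
            f"Feature: {self.feature}",
            f"Target user: {self.target_user}",
            f"Job to be done: {self.job_to_be_done}",
            "Constraints:",
            *[f"- {c}" for c in self.constraints],
        ]
        return "\n".join(lines)


# Hypothetical example values.
brief = FeatureBrief(
    feature="Saved filters",
    target_user="Support team leads",
    job_to_be_done="Reopen a frequently used ticket view in one click",
    constraints=["Web only for the first release"],
)
brief_text = brief.to_brief_text()
print(brief_text)
```

Refusing to render an incomplete brief is a design choice: a model will happily draft a PRD from half a brief, and the gaps are harder to spot in the draft than in the input.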

Some teams have extended this further, using AI to generate user stories from acceptance criteria and translate raw feedback into structured feature requests.

Dashboard-based monitoring has a structural limitation: it only catches what you already knew to watch.

An AI monitoring layer observes all metrics continuously, learns normal behavior patterns, and alerts the team when something deviates meaningfully. Critically, it surfaces a hypothesis alongside the alert rather than just a data point. The shift from reactive detection to proactive, contextual alerting is one of the highest-value changes an AI system can introduce for a product team.
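The core idea, learning a baseline and alerting on meaningful deviation, can be sketched with a simple z-score check. This is a deliberately minimal stand-in: real monitoring layers typically account for seasonality and trend, and the threshold of 3 standard deviations, the function name, and the sample numbers are all assumptions. Generating the accompanying hypothesis would be a separate step that is not shown here.

```python
import math
from statistics import mean, stdev


def detect_anomaly(history: list[float], latest: float, threshold: float = 3.0):
    """Return an alert dict if `latest` deviates from `history`, else None.

    Uses a plain z-score against the historical mean and sample standard
    deviation. Requires at least two historical points.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical values")

    baseline = mean(history)
    spread = stdev(history)

    if spread == 0:
        # A perfectly flat history: any change at all is a deviation.
        if latest == baseline:
            return None
        z = math.inf if latest > baseline else -math.inf
    else:
        z = (latest - baseline) / spread

    if abs(z) < threshold:
        return None

    return {
        "baseline": round(baseline, 2),
        "latest": latest,
        "z_score": z,
        "direction": "spike" if z > 0 else "drop",
    }


# Hypothetical daily signup counts.
daily_signups = [100, 102, 98, 101, 99, 100, 103]
print(detect_anomaly(daily_signups, 80))   # far below the usual range
print(detect_anomaly(daily_signups, 101))  # within the usual range
```

Because the check runs against every metric rather than a hand-picked dashboard, it can flag a deviation in a number nobody thought to watch.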

Prompt quality determines output quality. A vague prompt returns a generic result. A well-constructed prompt specifies the AI's role, provides context, defines the output format, and includes relevant constraints.
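As an illustration of those four elements, the sketch below assembles a prompt from a role, context, output format, and constraints, plus the task itself. The function name and the example wording are hypothetical and meant only to show the structure.

```python
def build_prompt(
    role: str,
    context: str,
    task: str,
    output_format: str,
    constraints: list[str],
) -> str:
    """Assemble a prompt from explicitly named sections."""
    sections = [
        f"Role: {role}",
        f"Context: {context}",
        f"Task: {task}",
        f"Output format: {output_format}",
        "Constraints:\n" + "\n".join(f"- {c}" for c in constraints),
    ]
    return "\n\n".join(sections)


# Hypothetical example; replace every value with your own product details.
prompt = build_prompt(
    role="You are a senior product analyst.",
    context="The attached text contains last month's support tickets for a B2B analytics tool.",
    task="Group the tickets into themes and rank the themes by how often they appear.",
    output_format="A table with columns: theme, ticket count, one representative quote.",
    constraints=[
        "Use only the tickets provided.",
        "Flag any theme with fewer than five tickets as low confidence.",
    ],
)
print(prompt)
```

Keeping each element as a named argument makes it obvious when one is missing, which is usually where a vague prompt starts.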

Customer feedback analysis:

PRD generation:

Backlog prioritization:

Over-reliance. When teams stop exercising critical judgment on AI outputs, the copilot becomes a risk rather than an asset. You need to remain expert enough in your domain to catch when the AI is wrong.

Hallucinations. Language models produce confident-sounding outputs that are sometimes factually incorrect. Any AI-generated insight that drives a significant decision should be validated against primary data first.

Poor data quality. AI does not fix a broken data pipeline. It amplifies whatever it receives. Data quality investments should precede AI workflow scaling, not follow it.

Adoption without buy-in. A technically excellent tool that the team doesn't trust is a failed implementation. Rollout requires training, visible examples of value, and clear guidance on when to trust outputs and when to override them.

Encoded bias. AI systems trained on historical decisions can quietly reinforce existing blind spots. Audit regularly which user segments are represented in your training data, and watch for patterns that systematically exclude certain groups.

Step 1: Audit your week. List every recurring task. Identify which ones require your judgment and which ones just require your time.

Step 2: Pick one workflow. Choose the task with the highest ratio of time consumed to judgment required. Feedback synthesis or first-draft documentation are the right starting points for most teams.

Step 3: Run it in parallel. Keep your existing process running while the AI workflow runs alongside it. Compare outputs over several weeks before replacing anything.

Step 4: Measure what changes. Track time saved, output quality relative to manual work, and team confidence in the tool. These numbers support broader adoption decisions.

Step 5: Build it into team infrastructure. Once validated, document the workflow, build it into standard operating procedures, and train the team. Personal productivity hacks do not scale. Team systems do.
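The selection rule in Step 2 is simple arithmetic: divide the time a task consumes by the judgment it requires and start with the largest ratio. A minimal sketch follows, assuming a self-assigned judgment rating from 1 to 5; the scale, task names, and hours are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RecurringTask:
    name: str
    hours_per_week: float
    judgment: int  # assumed self-rating: 1 (almost none) to 5 (mostly judgment)


def delegation_ratio(task: RecurringTask) -> float:
    """Time consumed per unit of judgment required; higher means better AI candidate."""
    if not 1 <= task.judgment <= 5:
        raise ValueError(f"judgment must be between 1 and 5 for {task.name!r}")
    return task.hours_per_week / task.judgment


def pick_first_workflow(tasks: list[RecurringTask]) -> RecurringTask:
    """Return the task with the highest time-to-judgment ratio."""
    return max(tasks, key=delegation_ratio)


# Hypothetical audit of one PM's week.
week = [
    RecurringTask("Feedback synthesis", hours_per_week=5, judgment=2),
    RecurringTask("Roadmap decisions", hours_per_week=4, judgment=5),
    RecurringTask("Release notes", hours_per_week=2, judgment=1),
]

first = pick_first_workflow(week)
print(first.name, delegation_ratio(first))
```

The rating is subjective, so the ranking is a conversation starter rather than a verdict. Its value is in forcing the audit from Step 1 into numbers that can be compared.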

AI will not replace product managers. The judgment, the stakeholder relationships, the strategic direction: none of that is changing.

What is changing is the operating model around those things. The analytical work, the documentation, the monitoring, the synthesis: all of it is moving toward AI-assisted and increasingly autonomous execution. The PM's role is shifting from primary producer of that work toward architect and supervisor of the systems that produce it.

The product managers who recognize that shift and design for it will compound their advantage over time. The ones who don't will find themselves doing by hand what their peers have already automated.

What part of your job requires your judgment, and what part only requires your time?

That gap is already opening. The question is which side of it you want to be on.
