A Great Place to Upskill

Company

Get the latest updates from Product Space

© Propel Learnings

There's a moment every product manager eventually hits.

You're three hours into synthesizing user interviews. You've read the same complaint phrased seventeen different ways. You're color-coding a spreadsheet that nobody will ever look at. And somewhere in the back of your mind, a quiet thought surfaces: This cannot be the highest use of my time.

It isn't. And soon, it won't be.

We're entering the era of agentic AI: systems that don't just answer questions but execute multi-step workflows autonomously. Tools like Claude and GPT-4 can already plan, draft, evaluate, and iterate. The next 24 months will see these capabilities bundled into specialized agents that run continuously in the background of every product team.

This raises a question that deserves an honest answer, not a reassuring one: What exactly happens to the product manager?

The short answer: the role doesn't disappear. It abstracts upward. PMs move from executing decisions to designing the systems that make decisions.

But let's be precise about what that means, because the honest version is more complicated than the optimistic one.

Before we talk about the future, let's look at what's happening now.

At companies like Notion, Figma, and Atlassian, product teams are already using AI to automate significant chunks of traditional PM work. Here's what that looks like in practice:

Research synthesis: A PM at a mid-size SaaS company recently fed six months of NPS verbatims, roughly 3,200 responses, into a Claude workflow with a structured analysis prompt. In 40 minutes, she had clustered themes, severity rankings, representative quotes, and a ranked list of opportunity areas. The same synthesis used to take her research team two weeks.

Experiment monitoring: Teams using tools like Statsig and Amplitude with AI layers on top are getting automated daily summaries of experiment status, automatic significance flags, and drafted result summaries. The PM's job in that loop has shifted from data retrieval to result interpretation and decision-making.

PRD drafting: With a well-designed prompt template, AI can produce a first-draft PRD (problem statement, user stories, acceptance criteria, edge cases, rollout plan) in under five minutes. The output requires significant refinement, but the blank-page problem is gone.

None of this is science fiction. It's available today, with current tools, to any team willing to invest in prompt architecture.

What it means is this: the execution layer of PM work (the synthesis, the formatting, the first drafts) is becoming commoditized. Not eliminated. But dramatically compressed.

Let's be honest about something the optimistic takes tend to gloss over.

Some PM work will genuinely disappear.

Junior PM roles that are primarily about coordination, documentation, and synthesis are most at risk. The "PM as note-taker and ticket-writer" archetype, which unfortunately describes many associate PM roles, is already being compressed. Teams that previously needed three PMs to manage research, documentation, and stakeholder communication are discovering they can operate with two (or one) using AI augmentation.

This isn't comfortable to say, but pretending otherwise doesn't serve anyone.

The real question isn't whether AI will take some PM work. It's: which PM work survives, and what does it require?

The answer, when you look carefully, is that what survives is the work that was always the hardest and the most valuable: the work that was never really about execution at all.

Here's the specific case for what remains deeply human, with the reasoning most blogs skip:

Strategic judgment under ambiguity. AI is excellent at optimizing for defined objectives. It is poor at deciding what the objective should be when the options are genuinely unclear and the tradeoffs are non-quantifiable. When Airbnb decided to pivot its entire product strategy toward "Live Anywhere" during COVID, that call required reading a cultural moment, understanding the company's specific risk appetite, and making a bet that couldn't be validated with data. AI can model scenarios. It cannot own the conviction.

Internal politics and power dynamics. Every PM knows that getting a feature shipped is often less about the quality of the PRD and more about understanding which VP is blocking it and why, which team is sandbagging their estimates, and how to sequence conversations to build unstoppable momentum. These are human systems with human motivations. AI can surface patterns in Slack sentiment. It cannot navigate a dysfunctional relationship between the Head of Sales and the CTO.

Ethical judgment with incomplete information. When Twitter's PM team was deciding how to handle COVID misinformation in early 2020, the "correct" answer couldn't be optimized. It required weighing free speech norms, public health obligations, advertiser relationships, user trust, and regulatory risk, all with contradictory signals and no clear framework. This is the kind of decision that requires human accountability, not just analysis.

Long-term narrative and taste. The products people love aren't just efficiently built; they have a point of view. They reflect accumulated decisions about what matters and what doesn't, what's worth the complexity, and what's too clever by half. That aesthetic and strategic sensibility is built over years of shipping, watching users, and caring deeply. It's the most human thing about product work, and it's the last thing AI will touch.

The pattern is clear: AI handles tasks where the objective function is well-defined. PMs handle the work where defining the objective is the work.

Here's the structural shift that's coming, described concretely.

The future of product operations isn't one AI assistant. It's a coordinated layer of specialized agents, each with a defined scope, running continuously and feeding intelligence upward to the PM who designed them.

Think of it as a product operating system: five agents running in parallel, each handling a distinct workflow.

Agent 1: The Research Synthesizer

What it does: Ingests raw qualitative data (interview transcripts, support tickets, NPS verbatims, app reviews) and produces structured insight packages. Clusters themes, identifies severity, surfaces contradictions, maps jobs-to-be-done.

What it can't do: Decide which insight matters most given your current strategic context. That's yours.

Tools today: Claude with long-context processing, combined with a vector database for semantic search across historical research.

Agent 2: The Strategy Stress-Tester

What it does: Takes a strategic proposal and runs it through a gauntlet: pre-mortem simulation, competitive reaction modeling, second-order effects analysis, assumption auditing. Returns a structured risk brief.

What it can't do: Tell you whether the risk is worth taking given your company's risk appetite, your personal career stakes, or the political moment you're in.

Tools today: Claude with multi-turn reasoning and structured prompt templates.

Agent 3: The Experiment Engine

What it does: Monitors your dashboards daily. Flags anomalies. Drafts experiment hypotheses when significant metrics move unexpectedly. Designs A/B tests with proper statistical architecture. Tracks significance. Writes result summaries.

What it can't do: Interpret ambiguous results where multiple hypotheses are equally valid, or make the call to ship when the data is directionally positive but not conclusive.

Tools today: Claude or GPT-4 connected to Amplitude or Mixpanel via API, with automated daily digest prompts.
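The "tracks significance" part of this agent is plain statistics, and it's worth seeing how small it is. Here's a minimal sketch, assuming a conversion-style metric and a two-sided two-proportion z-test. The function names, the 0.05 threshold, and the sample counts are illustrative assumptions, not the API of Amplitude, Mixpanel, or any other tool.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution, via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def significance_flag(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Package the test result the way a daily digest might report it."""
    z, p = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return {"z": round(z, 3), "p_value": round(p, 4), "significant": p < alpha}


# Hypothetical numbers: control converts 100/1000, variant 150/1000.
flag = significance_flag(100, 1000, 150, 1000)
```

One caveat the sketch ignores: re-running a fixed-horizon test like this every day inflates false positives, so a real monitoring loop would likely need a sequential testing method. Deciding whether that matters for a given experiment is exactly the kind of call that stays with the PM.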

Agent 4: The Sentiment Radar

What it does: Ingests Slack threads, sales call transcripts, support logs, and meeting notes. Detects recurring objections, cross-team friction signals, stakeholder anxiety patterns, and misalignment between what's being said in meetings and what's appearing in written communication.

What it can't do: Understand the context behind the sentiment. When the engineering team's Slack sentiment drops, is it the roadmap, a bad sprint, or a personnel issue? That requires human relationship knowledge.

Tools today: Claude with document analysis, piped from meeting transcription tools like Otter.ai or Fireflies.

Agent 5: The PRD Architect

What it does: Takes a structured feature brief and produces a complete first-draft PRD: problem statement, user stories, acceptance criteria, edge cases, success metrics, rollout plan, risk register.

What it can't do: Make the judgment calls baked into every PRD: what's in scope, what's explicitly out, which edge case is worth engineering complexity, what the acceptable error rate is. These are strategic decisions dressed as documentation choices.

Tools today: Claude with a carefully designed PRD prompt template, iterated over multiple rounds.

Individually, each of these agents saves hours. Coordinated together, they create something more significant: a product intelligence layer that runs continuously, surfaces what matters, and dramatically compresses the time between signal and decision.

Someone has to design that layer. Someone has to decide what questions each agent is asking, what outputs feed into which decisions, where human review is required versus where it can be automated, and how to detect when an agent is producing confidently wrong output.

That someone is the PM.

Here's a concrete picture of what this shift looks like in practice.

7:00 AM. Your Research Synthesizer has run overnight on the week's support tickets and NPS responses. You open a two-page insight brief over coffee. Three themes are flagged as new or escalating. You add two to next week's discovery agenda and dismiss one as a known issue already in the roadmap.

9:00 AM. Your Experiment Engine has flagged that your onboarding experiment is approaching significance two days early. The automated summary shows the variant is winning on activation but losing on Day-7 retention, a classic engagement/quality tradeoff. You spend 45 minutes in the data, form a hypothesis about why, and decide to extend the experiment to resolve the ambiguity. The agent drafts the extension brief. You edit three sentences and send it.

11:00 AM. You're writing a strategy memo for leadership. Your Strategy Stress-Tester has run the proposal through a pre-mortem and returned a risk brief. The most dangerous assumption is one you hadn't fully articulated to yourself. You spend an hour strengthening that section of the memo.

2:00 PM. Your Sentiment Radar has flagged unusual friction language in the last three sales-engineering syncs. The pattern suggests the enterprise roadmap commitments from Q2 are creating pressure the current sprint plan can't absorb. You set up a conversation with the sales lead to understand the scope before it becomes a crisis.

What you didn't do today: manually synthesize data, write a PRD from scratch, read every support ticket, or build a dashboard. What you did do: make five consequential judgment calls that only you could make, because only you hold the full context of your product, your team, and your company's strategic moment.

That's the day of the Autonomous PM. Not automated. Amplified.

The skills that make this future work are different from what most PM training programs teach. Here's the honest skill gap:

Prompt architecture. Not just writing good prompts, but designing prompt systems: templates with structured inputs, defined output formats, explicit constraints, and built-in quality checks. This is closer to system design than to writing.
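To make "prompt system" less abstract, here's a minimal sketch of a template that carries its own inputs, output format, constraints, and a quality check. Everything in it (the `PromptTemplate` class, its field names, the example task) is a hypothetical illustration. It builds a prompt string and validates a response, and it deliberately stops short of calling any model API.

```python
import json
from dataclasses import dataclass, field


@dataclass
class PromptTemplate:
    task: str
    required_inputs: list          # structured inputs the caller must supply
    output_keys: list              # defined output format: expected JSON keys
    constraints: list = field(default_factory=list)

    def render(self, **inputs):
        """Build the prompt text, refusing to run with missing inputs."""
        missing = [k for k in self.required_inputs if k not in inputs]
        if missing:
            raise ValueError(f"missing inputs: {missing}")
        lines = [f"Task: {self.task}", "", "Inputs:"]
        lines += [f"- {k}: {inputs[k]}" for k in self.required_inputs]
        lines += ["", "Constraints:"]
        lines += [f"- {c}" for c in self.constraints]
        lines += ["", "Respond with a JSON object containing exactly these keys:"]
        lines += [f"- {k}" for k in self.output_keys]
        return "\n".join(lines)

    def check_output(self, raw):
        """Built-in quality check: valid JSON object with exactly the expected keys."""
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            return False, "not valid JSON"
        if not isinstance(data, dict):
            return False, "not a JSON object"
        mismatched = sorted(set(data) ^ set(self.output_keys))
        if mismatched:
            return False, f"mismatched keys: {mismatched}"
        return True, "ok"


# Hypothetical template for a research synthesis task.
synthesis = PromptTemplate(
    task="Cluster NPS verbatims into themes",
    required_inputs=["verbatims", "product_area"],
    output_keys=["themes", "severity", "quotes"],
    constraints=["Quote verbatims exactly; do not paraphrase."],
)
prompt = synthesis.render(verbatims="(pasted responses)", product_area="onboarding")
```

The design choice worth noticing is that the template fails loudly. A missing input raises before anything is sent, and a malformed response is rejected before anyone reads it as a finding.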

Workflow design. Understanding how to decompose a complex PM task (e.g., "synthesize a quarter of research") into a sequence of AI-executable steps with defined handoffs, human review checkpoints, and failure modes. Think of it as process engineering for intelligence workflows.
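Here's one way the decomposition could look in code: a minimal sketch in which each step is a plain function, some steps are marked as human review checkpoints, and every run ends in one of three named outcomes. The `Step` class, `run_workflow`, and the two stand-in steps are hypothetical; in practice each `run` function would wrap a model call.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]
    needs_review: bool = False  # True marks a human review checkpoint


def run_workflow(steps, payload, approve):
    """Run steps in order, handing each output to the next step.

    `approve(step_name, output)` stands in for a human reviewer.
    Returns (status, step_name, payload_or_error).
    """
    for step in steps:
        try:
            payload = step.run(payload)
        except Exception as exc:
            # Failure mode: stop and report which step broke.
            return "failed", step.name, str(exc)
        if step.needs_review and not approve(step.name, payload):
            return "rejected", step.name, payload
    return "done", steps[-1].name if steps else None, payload


def cluster(tickets):
    # Stand-in for a model call: group tickets by their first word.
    themes = {}
    for ticket in tickets:
        themes.setdefault(ticket.split()[0].lower(), []).append(ticket)
    return themes


def rank(themes):
    # Stand-in for opportunity ranking: most frequent theme first.
    return sorted(themes, key=lambda name: len(themes[name]), reverse=True)


steps = [Step("cluster", cluster, needs_review=True), Step("rank", rank)]
result = run_workflow(
    steps,
    ["Login fails on SSO", "Login is slow", "Export broken"],
    approve=lambda name, output: True,  # auto-approve for the demo
)
```

The review checkpoint sits after clustering on purpose: if the themes are wrong, everything ranked from them is wrong too, so that is where a human look is cheapest.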

Evaluation and quality control. AI produces confident-sounding output that is sometimes confidently wrong. PMs who use agents at scale need calibrated skepticism: the ability to spot when an output looks right but isn't, and to design systems that surface errors before they propagate into decisions.
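One cheap check of this kind: when an agent returns "representative quotes," confirm that each one actually appears in the source material. This is a minimal sketch, assuming quotes are meant to be verbatim; the function name and the sample data are made up for illustration.

```python
import re


def _normalize(text):
    # Collapse whitespace and ignore case so formatting differences don't matter.
    return re.sub(r"\s+", " ", text).strip().lower()


def ungrounded_quotes(quotes, sources):
    """Return the quotes that do not appear verbatim in any source text."""
    haystack = [_normalize(source) for source in sources]
    missing = []
    for quote in quotes:
        needle = _normalize(quote)
        # An empty quote is treated as ungrounded rather than trivially present.
        if not needle or not any(needle in text for text in haystack):
            missing.append(quote)
    return missing


# Hypothetical source responses and agent-reported quotes.
sources = [
    "The export button is hidden.  I searched for ten minutes.",
    "Onboarding was smooth but billing is confusing.",
]
quotes = [
    "the export button is hidden",
    "billing is a nightmare",  # plausible-sounding, but nobody said it
]
suspect = ungrounded_quotes(quotes, sources)
```

This only catches fabricated or altered quotes. It says nothing about whether the themes built on real quotes are the right ones, which is where the calibrated skepticism still has to come from a person.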

Incentive and constraint design. Every agent prompt is a set of objectives and constraints. If you design them poorly, you get outputs that optimize for the wrong thing: summaries that miss important but rare signals, PRDs that address stated requirements but miss the underlying user need. This is the same muscle as designing good metrics, applied to AI system design.

None of this requires becoming an engineer. All of it requires PMs to think more systemically about how decisions get made, which, if you're being honest, is what great PMs were doing anyway.

Every significant technological shift in the knowledge economy has followed the same arc.

Calculators didn't eliminate mathematicians; they freed them from arithmetic and elevated them toward theoretical work. Spreadsheets didn't eliminate finance professionals; they eliminated the analysts who only did calculations and elevated the ones who did interpretation. Modern frameworks didn't eliminate engineers; they commoditized boilerplate implementation and created leverage for architects.

In each case, the roles that survived weren't the ones that fought the abstraction. They were the ones that moved with it, taking the new floor as their new starting point.

AI is doing the same thing to product management. The floor is rising. The execution layer is being automated. The strategic and judgment layer, the part that was always the hardest and most valuable, is becoming the entire job.

That is not a threat to good product managers.

It is an elimination of the parts of the job that were always beneath them.

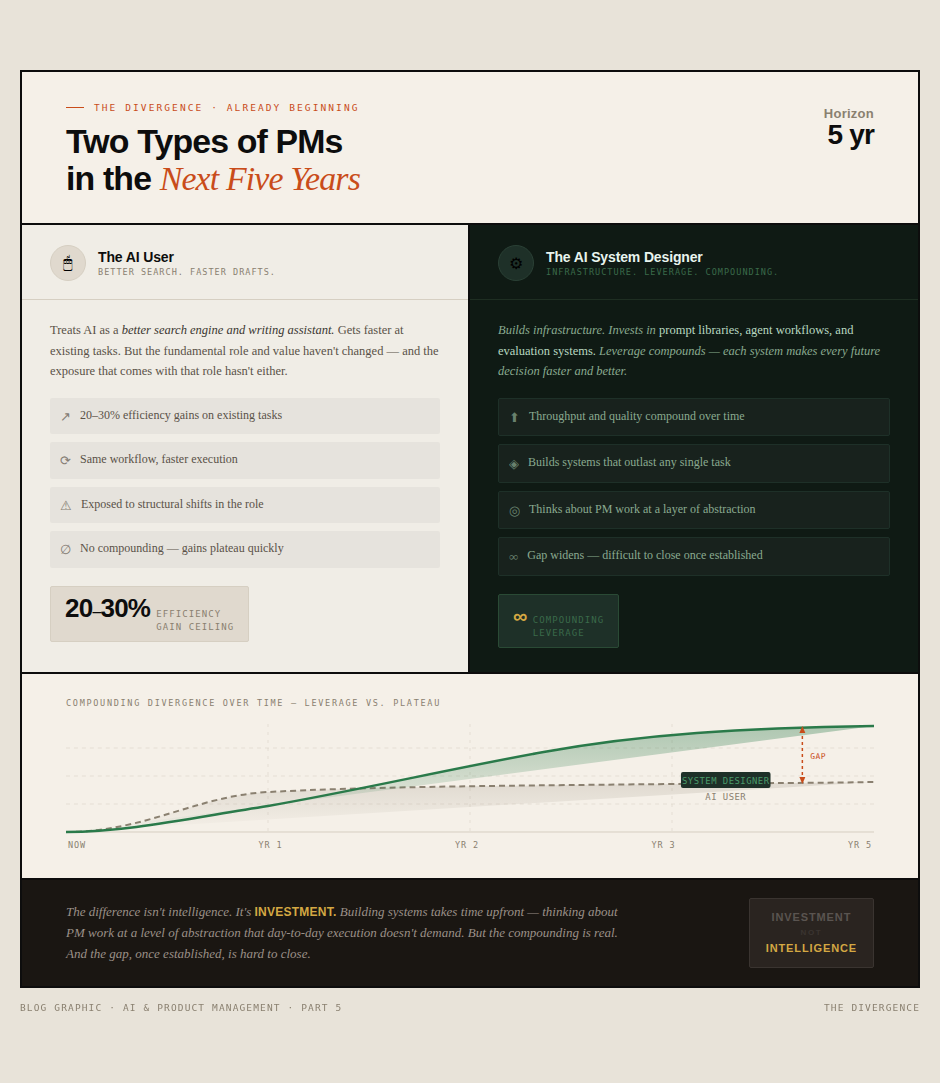

Here's the divergence that's already beginning:

The AI User treats AI as a better search engine and writing assistant. They get 20-30% efficiency gains. They're faster at existing tasks. But their fundamental role and value haven't changed. They remain exposed to whatever happens to that role.

The AI System Designer builds infrastructure. They invest time in prompt libraries, agent workflows, and evaluation systems. Their leverage compounds: each system they build makes every future decision faster and better. After a year, they're operating at a level of throughput and quality that's genuinely difficult to match without comparable infrastructure.

The difference isn't intelligence. It's investment. Building systems takes time upfront. It requires thinking about PM work at a level of abstraction that most day-to-day execution doesn't demand.

But the compounding is real. And the gap, once established, is hard to close.

If you want to move from AI user to AI system designer, the path is concrete:

Week 1–2: Pick one recurring PM task you do every week that involves synthesis or drafting. Design a prompt template for it. Run it ten times. Refine it until the output consistently meets your standard.

Month 1: Build your first mini-workflow: a two-step process where the output of one AI step feeds the input of another. Research synthesis that feeds into opportunity ranking is a good starting point.

Month 2–3: Add a monitoring loop. Design a weekly agent that ingests one source of ongoing signals (support tickets, NPS, experiment results) and produces a standing brief you review every Monday.
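The standing brief itself can start out very simple. Here's a minimal sketch, assuming each ticket or response has already been given a theme label upstream (by a model or by hand). The thresholds and the theme names are illustrative assumptions, not recommendations.

```python
from collections import Counter


def weekly_brief(last_week, this_week, escalation_ratio=1.5, min_count=3):
    """Compare theme labels across two weeks and bucket each current theme."""
    prev, curr = Counter(last_week), Counter(this_week)
    brief = {"new": [], "escalating": [], "stable": [], "rare": []}
    for theme, count in curr.most_common():
        if count < min_count:
            # Keep rare themes visible instead of silently dropping them.
            brief["rare"].append((theme, count))
        elif prev[theme] == 0:
            brief["new"].append((theme, count))
        elif count >= escalation_ratio * prev[theme]:
            brief["escalating"].append((theme, prev[theme], count))
        else:
            brief["stable"].append((theme, prev[theme], count))
    return brief


# Hypothetical theme labels, one per support ticket.
last_week = ["billing"] * 4 + ["login"] * 2
this_week = ["billing"] * 4 + ["login"] * 6 + ["export"] * 3 + ["sso"]
brief = weekly_brief(last_week, this_week)
```

Note the `rare` bucket. Filtering out low-count themes would make the brief shorter, but it would also build in the failure described earlier: a summary that misses important but rare signals.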

Month 4–6: Build the stack. Connect the pieces. Create the product intelligence layer for your specific product and team context.

Each step surfaces things that don't work, which is how you learn what actually works. The goal isn't a perfect system. It's a system that's better than what you had before, that you understand well enough to improve.

The PM role is changing. Some of what we've called product management work (the coordination, the documentation, the synthesis) is going to be done by machines. That part is real, and it's worth taking seriously rather than dismissing.

But the work that makes the difference (the judgment, the taste, the navigation of human systems, the ownership of outcomes) is not going anywhere. If anything, it's becoming more visible, because the execution that used to obscure it is being stripped away.

The Autonomous PM isn't a PM who gets replaced by automation.

It's a PM who builds the automation, and then does the work that was always worth doing.

Not automated. Elevated.

The shift is already underway. The question is whether you're designing the system or waiting to see what it does to you.
